Gesture Controls for OpenLayers, Google Maps & Leaflet

12 Apr 2026 (Updated 10 May 2026)

After creating gesture-controlled games such as Eyebrow Tetris and Pug’s Hunt, I tried controlling a map using just a webcam and hand gestures. No mouse. No keyboard. Just hands. It worked, but it also broke in ways I didn’t expect.

Map gesture controls let you pan, zoom, and rotate interactive maps using hand tracking via your webcam. No mouse, no keyboard, no backend. The library runs entirely in the browser using MediaPipe's hand landmarker and works with OpenLayers, Google Maps, and Leaflet.

The idea

Do you know the movie Minority Report? It’s a Tom Cruise film in which his character stops crimes before they happen. It has some great scenes where he controls an interface with just his hands.

I wanted to build that, but in the browser. At KNMI I work on a project called OpenGeoweb, which uses OpenLayers, and since it is open source I decided to build a small plugin that lets you control an OpenLayers map with gestures. Everything runs locally in the browser using MediaPipe. No backend. Camera data never leaves the device.

Demo

Try it yourself here: sanderdesnaijer.github.io/map-gesture-controls

Version 1: the Minority Report prototype

The first version was simple. Two gestures:

- Fist: pan the map (move your fist around, the map follows)

- Two open hands: zoom (move your hands apart to zoom in, bring them together to zoom out)

The zooming worked like a swimming motion: hands apart to zoom in, hands together to zoom out. Panning used a single fist, and the map followed it.

It felt great. I added blue glows on the fingertips, exactly like in the movie, so I felt a bit like Tom Cruise. The fist was also very responsive and intuitive.

Posting it on Reddit

I posted the first version on Reddit. I figured a few people might find it interesting. What I got back was way more useful than I expected. People actually tried it. They reported what worked, what felt weird, and what broke. Some went out of their way to test edge cases and send detailed feedback.

The main feedback and issues:

- Zooming out meant bringing your hands close together. And zooming out *a lot* meant... your hands were already as close as they could get. Worse, zooming in meant spreading your hands wide, and at some point your hands just left the frame. The webcam couldn't see them anymore, so tracking dropped.

- There was no rotation. No reset. I hadn't even thought about those yet. It was just pan and zoom.

Some people even suggested new controls, which I later implemented. That's the thing about open source. You can build something in isolation and think it works fine. But real users find the gaps you'd never notice on your own. The feedback I got pushed the project from a fun experiment into something much more solid.

I was very grateful for the feedback, and for the people who kept testing it even after I made changes. When people take time out of their day to test your stuff and give you honest feedback, that means a lot. The least I could do was thank them, so I added a special thanks section to the README.

Version 2: improving the controls

Based on the feedback, I reworked the gesture system completely. The current version looks like this:

- Left hand fist or pinch → pan the map

- Right hand fist or pinch → zoom (move hand up to zoom in, down to zoom out)

- Both hands fist or pinch → rotate the map

- Hands together (pray pose, hold 1 second) → reset everything

The big changes: zoom is now single-handed (right hand, vertical movement), so you never run out of screen space. Rotation got added. And there's a proper reset gesture.

Why is single-hand zoom better?

Instead of tracking the distance between two hands, zoom now uses the vertical position of your right wrist. Move your hand up, zoom in. Move it down, zoom out.

This fixes the screen-space problem completely. You're not limited by how far apart your hands can go. And because it's one hand, your left hand is free for panning, or you can just let it rest.
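A minimal sketch of the single-hand zoom mapping, assuming MediaPipe's normalized image coordinates (y runs from 0 at the top of the frame to 1 at the bottom) and a hypothetical gain `ZOOM_PER_UNIT`. The library's real gain, smoothing, and dead zone are not shown. In this sketch the zoom follows relative movement, so releasing the fist and gripping again lets you keep zooming without your hand leaving the frame.

```typescript
// Hypothetical gain: zoom levels produced by moving the wrist one full frame height.
const ZOOM_PER_UNIT = 4;

/** Converts vertical movement of the right wrist into per-frame zoom deltas. */
class VerticalZoom {
  private lastY: number | null = null;

  /** Call when the zoom gesture (right fist or pinch) starts. */
  start(wristY: number): void {
    this.lastY = wristY;
  }

  /**
   * Returns the zoom change for this frame. Normalized y grows downward,
   * so moving the hand up (smaller y) gives a positive delta (zoom in).
   */
  update(wristY: number): number {
    if (this.lastY === null) {
      this.lastY = wristY;
      return 0;
    }
    const delta = (this.lastY - wristY) * ZOOM_PER_UNIT;
    this.lastY = wristY;
    return delta;
  }

  /** Call when the gesture ends so the next grip doesn't cause a jump. */
  stop(): void {
    this.lastY = null;
  }
}

// Usage: the wrist moves up from y = 0.6 to y = 0.5, so the map zooms in.
const zoom = new VerticalZoom();
zoom.start(0.6);
const zoomIn = zoom.update(0.5);
const zoomOut = zoom.update(0.7);
```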

Adding rotation

With left and right hands doing separate things, both hands together became available for a new gesture: rotation. Hold both fists and tilt your wrists clockwise or counter-clockwise, and the map rotates. The angle is calculated from the line between your two wrists using atan2.
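A small sketch of the atan2 step. The article only says the angle comes from the line between the wrists; the wrap-around in `angleDelta` is an addition here, because `Math.atan2` jumps from π to -π and an unwrapped difference would spin the map almost a full turn. Coordinates are assumed to be normalized image coordinates with y pointing down, so a positive delta means the wrist line turned clockwise on screen (webcam mirroring is ignored).

```typescript
interface Point {
  x: number;
  y: number;
}

/** Angle in radians of the line from the left wrist to the right wrist. */
function wristAngle(left: Point, right: Point): number {
  return Math.atan2(right.y - left.y, right.x - left.x);
}

/** Smallest signed difference between two angles, wrapped into (-π, π]. */
function angleDelta(current: number, previous: number): number {
  let d = current - previous;
  while (d <= -Math.PI) d += 2 * Math.PI;
  while (d > Math.PI) d -= 2 * Math.PI;
  return d;
}

// Usage: per frame, rotate the map by the change in wrist-line angle.
const level = wristAngle({ x: 0.3, y: 0.5 }, { x: 0.7, y: 0.5 });
const tilted = wristAngle({ x: 0.3, y: 0.5 }, { x: 0.7, y: 0.6 });
const rotationStep = angleDelta(tilted, level);
```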

To prevent accidental rotation when you're just reaching for a two-hand gesture, both hands need to be stable for 3 consecutive frames before the system escalates from pan or zoom into rotate mode.
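The escalation rule can be sketched as a per-frame counter. This is an illustration of the "3 consecutive frames" idea, not the library's state machine; the `Mode` names and the `bothHandsGripping` input (both hands in a fist or pinch this frame) are assumptions.

```typescript
type Mode = "idle" | "pan" | "zoom" | "rotate";

// From the article: both hands must be stable for 3 consecutive frames.
const ESCALATION_FRAMES = 3;

class RotateEscalation {
  private stableFrames = 0;

  /**
   * Call once per frame. While a single-hand mode (pan or zoom) is active,
   * switches to "rotate" only after both hands have gripped for
   * ESCALATION_FRAMES frames in a row; any break resets the count.
   */
  next(current: Mode, bothHandsGripping: boolean): Mode {
    if (current !== "pan" && current !== "zoom") return current;
    this.stableFrames = bothHandsGripping ? this.stableFrames + 1 : 0;
    if (this.stableFrames >= ESCALATION_FRAMES) {
      this.stableFrames = 0;
      return "rotate";
    }
    return current;
  }
}
```

A single noisy frame where the second hand appears only bumps the counter to 1, and the next frame without it resets the count, so panning or zooming continues uninterrupted.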

The problem: why are hand gestures so unreliable?

The biggest issue wasn't performance. It wasn't even tracking. It was this: Your hands are constantly passing through other gestures.

Example: you move from an open hand to a fist. For part of that movement, your hand still looks open. So if your logic says two open hands = reset, you accidentally trigger resets while just trying to move.

The worst offender: "two open palms"

This sounds like a great gesture: hold both hands open for 2 seconds to reset the map. In reality, it didn’t work reliably, because zoom and rotate gestures pass through open hands on the way in and out. Moving your hands naturally often looks like "open palms" to the detector, and even small delays can't save you when the gesture itself is ambiguous. Result: random resets. Constantly.

The solution: the "pray" gesture

Instead of looking for "common" gestures, I switched to something more intentional. Hands together in the center, like a namaste pose.

Why this works: it's rare during normal interaction, fingers are close together so it doesn't look like "open palm," and you have to intentionally do it. It requires a full 1-second hold before it triggers, with a progress bar so you can see it filling up.

In code terms: both hands must be tracked, wrists need to be close together (within 0.45 normalized screen distance), and neither hand can be in a fist or pinch. If the pose drops briefly, there's a 300ms grace period before the progress resets, so a single lost tracking frame doesn't ruin it.
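The rules in the previous paragraph can be sketched like this. The thresholds (0.45, 1 second, 300ms) come from the article; the `Hand` shape, the gesture labels, and the choice to freeze progress during a dropout are assumptions for illustration.

```typescript
type HandGesture = "fist" | "pinch" | "open" | "none";
interface Hand {
  wrist: { x: number; y: number }; // normalized screen coordinates
  gesture: HandGesture;
}

const MAX_WRIST_DISTANCE = 0.45;
const HOLD_MS = 1000;
const GRACE_MS = 300;

/** Both hands tracked, wrists close together, and neither hand gripping. */
function isPrayPose(left: Hand | null, right: Hand | null): boolean {
  if (!left || !right) return false;
  const dist = Math.hypot(left.wrist.x - right.wrist.x, left.wrist.y - right.wrist.y);
  const gripping = (h: Hand) => h.gesture === "fist" || h.gesture === "pinch";
  return dist < MAX_WRIST_DISTANCE && !gripping(left) && !gripping(right);
}

/** Turns per-frame pose checks into hold progress (0..1); 1 means "reset now". */
class PrayReset {
  private holdStart: number | null = null;
  private lastSeen: number | null = null;

  update(poseActive: boolean, nowMs: number): number {
    if (poseActive) {
      if (this.holdStart === null) this.holdStart = nowMs;
      this.lastSeen = nowMs;
    } else if (this.lastSeen === null || nowMs - this.lastSeen > GRACE_MS) {
      // Pose gone for longer than the grace period: progress resets.
      this.holdStart = null;
      this.lastSeen = null;
      return 0;
    }
    // During a brief dropout, progress stays at the last frame the pose was seen.
    const progress = Math.min((this.lastSeen! - this.holdStart!) / HOLD_MS, 1);
    if (progress >= 1) {
      // Restart the count so a continued hold doesn't fire on every frame.
      this.holdStart = null;
      this.lastSeen = null;
    }
    return progress;
  }
}
```

The returned progress value is what a progress bar would display while the user holds the pose.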

Much more stable, and funny too: whenever you get lost, just pray.

Fist vs pinch: why not both?

Early on I only supported fist gestures. But some people found it uncomfortable to hold a tight fist while moving their hand. So I added pinch (thumb and index finger together) as an alternative trigger for every action. Fist or pinch, both work the same way.

The tricky part was pinch detection. When you're holding a pinch, your fingers hover right on the boundary between "touching" and "not touching," so the classifier would flicker between pinch and none on every frame.

The fix: hysteresis. A pinch is detected when the thumb-tip-to-index-tip distance drops below 25% of the hand size, but it isn't released until that distance rises above 35%. That wider release band means that once you're pinching, small finger wobbles don't break it.
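A minimal sketch of the two-threshold pinch check. The 25%/35% values come from the article; how "hand size" is measured is not stated, so the caller is assumed to pass some per-frame scale, for example the distance from the wrist (MediaPipe landmark 0) to the middle-finger knuckle (landmark 9). Thumb tip and index tip are MediaPipe landmarks 4 and 8.

```typescript
interface Landmark {
  x: number;
  y: number;
}

const PINCH_ENTER = 0.25; // start pinching below 25% of hand size
const PINCH_EXIT = 0.35; // stop pinching only above 35% of hand size

class PinchDetector {
  private pinching = false;

  /** Returns whether the hand is pinching, with hysteresis between the two thresholds. */
  update(thumbTip: Landmark, indexTip: Landmark, handSize: number): boolean {
    const ratio = Math.hypot(thumbTip.x - indexTip.x, thumbTip.y - indexTip.y) / handSize;
    if (this.pinching) {
      if (ratio > PINCH_EXIT) this.pinching = false;
    } else if (ratio < PINCH_ENTER) {
      this.pinching = true;
    }
    return this.pinching;
  }
}
```

Any ratio between 0.25 and 0.35 keeps the previous state, which is exactly the band where a single threshold would flicker.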

Gesture design rules I ended up with

After a lot of trial and error:

- Avoid gestures that appear during transitions. If a gesture shows up while moving between two others, it's unreliable.

- Prefer "intentional" poses. Things users wouldn't accidentally do. The pray gesture works precisely because nobody does it by accident while panning around.

- Left hand and right hand do different things. Splitting actions across hands keeps things unambiguous. Left fist = pan. Right fist = zoom. Both fists = rotate. No confusion about which action is active.

- Guard against noise with dwell timers and escalation frames. A gesture has to be held for at least 80ms before it's confirmed. And when going from a single-hand gesture (pan or zoom) to both hands (rotate), the second hand needs to be stable for 3 consecutive frames. This prevents a single noisy tracking frame from interrupting what you're doing.

- Add grace periods on release. When a gesture ends, the system waits 150ms before dropping back to idle. This smooths over brief tracking dropouts where MediaPipe loses a hand for a frame or two.
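The dwell timer and release grace period from the rules above can be combined into one small debouncer. The 80ms and 150ms values come from the article; the class, the gesture labels, and the choice to hold the old gesture during the grace period even when a different one appears are assumptions for this sketch.

```typescript
type Gesture = "idle" | "pan" | "zoom" | "rotate";

const DWELL_MS = 80; // a new gesture must be stable this long before it's confirmed
const RELEASE_GRACE_MS = 150; // an active gesture survives dropouts this long

class GestureDebouncer {
  private active: Gesture = "idle";
  private candidate: Gesture = "idle";
  private candidateSince = 0;
  private lastActiveSeen = 0;

  /** raw = this frame's classified gesture; nowMs = frame timestamp. Returns the confirmed gesture. */
  update(raw: Gesture, nowMs: number): Gesture {
    // Track how long the raw classification has been unchanged.
    if (raw !== this.candidate) {
      this.candidate = raw;
      this.candidateSince = nowMs;
    }
    if (this.active !== "idle" && raw === this.active) {
      this.lastActiveSeen = nowMs;
      return this.active;
    }
    if (this.active !== "idle" && nowMs - this.lastActiveSeen <= RELEASE_GRACE_MS) {
      // Active gesture briefly missing (e.g. a lost tracking frame): keep it.
      return this.active;
    }
    // Idle, or the grace period expired: confirm a new gesture only after the dwell time.
    if (raw !== "idle" && nowMs - this.candidateSince >= DWELL_MS) {
      this.active = raw;
      this.lastActiveSeen = nowMs;
    } else {
      this.active = "idle";
    }
    return this.active;
  }
}
```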

The UX tradeoff

Let's be honest: This is not better than a mouse. But that's not the point. What is interesting:

- It works in the browser with zero install

- Feels surprisingly natural after a minute

- Opens up accessibility and experimental UI ideas (kiosks, exhibits, hands-free interaction)

- Camera data stays local, nothing is sent anywhere

Also, it's just fun to use and gives a quick wow effect.

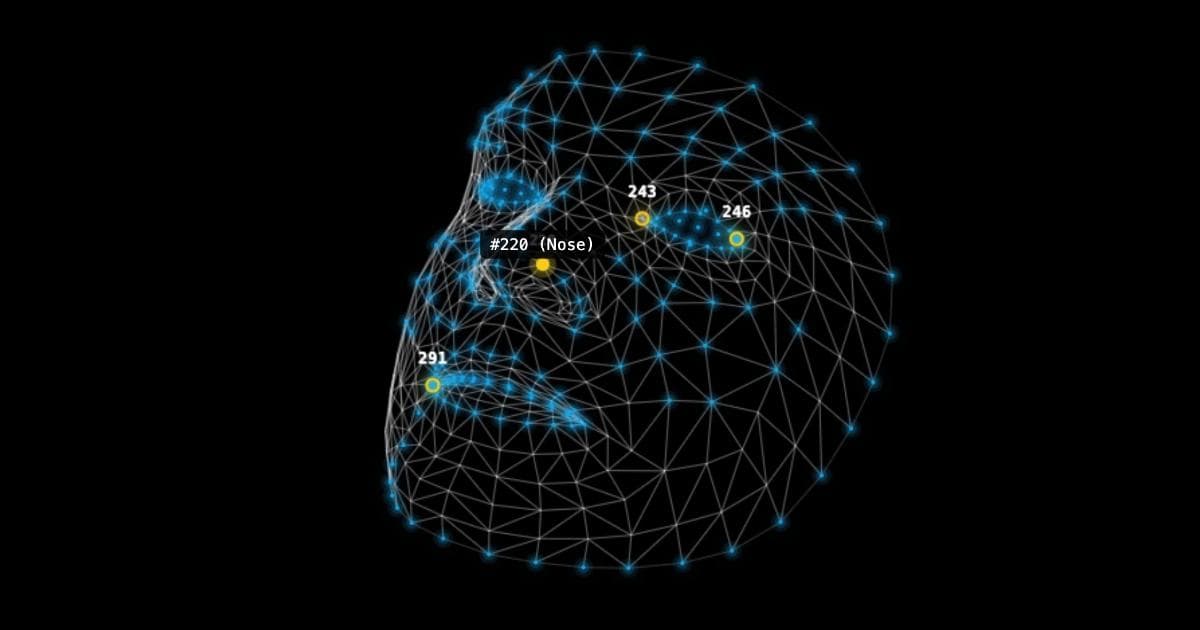

How it works under the hood (short version)

- Webcam input via getUserMedia (640x480, front-facing camera)

- Hand tracking with MediaPipe Hand Landmarker (WASM, runs on GPU, detects 21 3D landmarks per hand)

- Gesture classification based on finger positions: a fist needs at least 3 of 4 fingers curled, open palm needs all fingers extended AND spread apart, pinch checks thumb-to-index distance with hysteresis

- A state machine turns classified gestures into map actions, with exponential smoothing (EMA) on all movement to filter hand tremor

- Dead zones on pan (10px minimum) and zoom (0.5% minimum distance change) so tiny hand wobbles don't move the map

- Map interaction hooked into OpenLayers via pixel-to-coordinate conversion
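The fist and open-palm rules listed above can be sketched on MediaPipe's 21 hand landmarks (wrist = 0, middle-finger knuckle = 9, and PIP joint/tip pairs 6/8, 10/12, 14/16, 18/20 for the four fingers). The "curled" test (tip closer to the wrist than the PIP joint) and the `MIN_SPREAD` threshold are common heuristics assumed here, not necessarily what the library does.

```typescript
interface Landmark {
  x: number;
  y: number;
  z: number;
}

// [PIP joint, tip] indices for index, middle, ring, and pinky fingers.
const FINGERS: Array<[number, number]> = [[6, 8], [10, 12], [14, 16], [18, 20]];
const WRIST = 0;
const MIDDLE_MCP = 9;
const MIN_SPREAD = 0.15; // hypothetical: adjacent tips apart by 15% of hand size

const dist = (a: Landmark, b: Landmark) => Math.hypot(a.x - b.x, a.y - b.y);

/** Heuristic: a finger is curled when its tip is closer to the wrist than its PIP joint. */
function isCurled(lm: Landmark[], [pip, tip]: [number, number]): boolean {
  return dist(lm[tip], lm[WRIST]) < dist(lm[pip], lm[WRIST]);
}

/** Fist: at least 3 of the 4 fingers curled. */
function isFist(lm: Landmark[]): boolean {
  return FINGERS.filter((f) => isCurled(lm, f)).length >= 3;
}

/** Open palm: all fingers extended AND adjacent fingertips spread apart. */
function isOpenPalm(lm: Landmark[]): boolean {
  const handSize = dist(lm[WRIST], lm[MIDDLE_MCP]);
  const allExtended = FINGERS.every((f) => !isCurled(lm, f));
  const tips = FINGERS.map(([, tip]) => lm[tip]);
  // tips.slice(1)[i] is tips[i + 1], so this compares each pair of neighbouring tips.
  const spread = tips.slice(1).every((t, i) => dist(t, tips[i]) / handSize > MIN_SPREAD);
  return allExtended && spread;
}
```

The spread check is what separates an open palm from flat hands pressed together, such as the pray pose.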

Everything runs client-side.
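The EMA smoothing and the 10px pan dead zone from the list above can be sketched together. The smoothing factor `0.4` is a hypothetical value, and measuring the dead zone from an anchor (so slow, steady movement still pans once it adds up to 10px) is a design choice made for this sketch. The 0.5% zoom dead zone would follow the same pattern on relative change.

```typescript
/** Exponential moving average: s = s + alpha * (x - s). */
class Ema {
  private value: number | null = null;
  constructor(private readonly alpha: number) {}

  update(x: number): number {
    this.value = this.value === null ? x : this.value + this.alpha * (x - this.value);
    return this.value;
  }

  reset(): void {
    this.value = null;
  }
}

const PAN_DEAD_ZONE_PX = 10;

/** Smooths the hand position and emits a pan only after it moves 10px from the last anchor. */
class SmoothedPan {
  private readonly x = new Ema(0.4); // hypothetical smoothing factor
  private readonly y = new Ema(0.4);
  private anchor: { x: number; y: number } | null = null;

  update(px: number, py: number): { dx: number; dy: number } {
    const sx = this.x.update(px);
    const sy = this.y.update(py);
    if (!this.anchor) {
      this.anchor = { x: sx, y: sy };
      return { dx: 0, dy: 0 };
    }
    const dx = sx - this.anchor.x;
    const dy = sy - this.anchor.y;
    if (Math.hypot(dx, dy) < PAN_DEAD_ZONE_PX) return { dx: 0, dy: 0 }; // tremor: ignore
    this.anchor = { x: sx, y: sy };
    return { dx, dy };
  }
}
```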

Try it / use it

Get started with your map library of choice:

Or jump straight to the examples:

Supporting Google Maps and Leaflet

The gesture engine itself is map-agnostic. All the gesture detection, smoothing, dwell timers, and state machine logic lives in a shared core package. The map-specific packages just translate "move left 30px" or "zoom in 0.5 levels" into the right API calls for each library.
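To illustrate that split, here is a sketch of what an adapter contract and an OpenLayers-style implementation could look like. `MapAdapter` is hypothetical and not the packages' published interface. The OpenLayers methods used (`Map#getSize`, `Map#getCoordinateFromPixel`, `Map#getView`, `View#setCenter`, `View#getZoom`, `View#setZoom`, `View#getRotation`, `View#setRotation`) are real, but they are described here through minimal structural types so the sketch runs without the `ol` package.

```typescript
/** Hypothetical contract: the core emits these calls, each map package implements them. */
interface MapAdapter {
  panBy(dxPx: number, dyPx: number): void;
  zoomBy(levels: number): void;
  rotateBy(radians: number): void;
}

/** Minimal structural types for the OpenLayers methods used below. */
interface OlViewLike {
  setCenter(center: number[]): void;
  getZoom(): number | undefined;
  setZoom(zoom: number): void;
  getRotation(): number;
  setRotation(rotation: number): void;
}
interface OlMapLike {
  getSize(): number[] | undefined;
  getCoordinateFromPixel(pixel: number[]): number[];
  getView(): OlViewLike;
}

class OpenLayersAdapter implements MapAdapter {
  constructor(private readonly map: OlMapLike) {}

  /**
   * Drag semantics: content follows the hand, so the new center is the
   * coordinate currently under (center pixel - delta). Going through
   * getCoordinateFromPixel also accounts for resolution and rotation.
   */
  panBy(dxPx: number, dyPx: number): void {
    const size = this.map.getSize();
    if (!size) return;
    const target = this.map.getCoordinateFromPixel([size[0] / 2 - dxPx, size[1] / 2 - dyPx]);
    this.map.getView().setCenter(target);
  }

  zoomBy(levels: number): void {
    const view = this.map.getView();
    const zoom = view.getZoom();
    if (zoom !== undefined) view.setZoom(zoom + levels);
  }

  rotateBy(radians: number): void {
    const view = this.map.getView();
    view.setRotation(view.getRotation() + radians);
  }
}
```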

That said, each library has its own quirks.

Google Maps

Google Maps uses moveCamera() for all operations, which is clean but quantizes values internally. To keep gestures feeling smooth, the adapter tracks zoom and heading as floating-point values between frames and only rounds when passing them to the API. Pan direction is also rotated by the current heading, so moving your hand left always moves the map left, even when the map is rotated.
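Both ideas can be sketched without the Maps SDK. `CameraTarget` is a minimal stand-in for the part of `google.maps.Map#moveCamera` used here, and `FloatCamera` and `screenToMapPan` are hypothetical names. The sketch keeps zoom and heading in its own float state instead of reading them back from the map each frame, so small per-frame deltas accumulate; any rounding is left to the API boundary. Heading is in degrees clockwise from north, as in the Maps JavaScript API.

```typescript
interface CameraTarget {
  moveCamera(options: { zoom?: number; heading?: number }): void;
}

/** Keeps zoom/heading as floats so sub-step gesture deltas aren't lost to quantization. */
class FloatCamera {
  constructor(private zoom: number, private heading: number, private readonly map: CameraTarget) {}

  apply(zoomDelta: number, headingDeltaDeg: number): void {
    this.zoom += zoomDelta;
    this.heading = (((this.heading + headingDeltaDeg) % 360) + 360) % 360; // keep in [0, 360)
    this.map.moveCamera({ zoom: this.zoom, heading: this.heading });
  }
}

/**
 * Rotates a screen-space movement (dx right, dy down, in px) into map-aligned
 * east/north components for a map with heading `headingDeg`. The caller would
 * still convert this to lat/lng and flip the sign for drag semantics.
 */
function screenToMapPan(dx: number, dy: number, headingDeg: number): { east: number; north: number } {
  const h = (headingDeg * Math.PI) / 180;
  return {
    east: dx * Math.cos(h) - dy * Math.sin(h),
    north: -dx * Math.sin(h) - dy * Math.cos(h),
  };
}
```

For example, with the map facing east (heading 90), a movement to the right on screen points south on the map.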

One requirement: you need a Vector map (set via Map ID in the Google Cloud Console) for rotation to work. Raster maps don't support heading changes.

Install:

Leaflet

Leaflet doesn't have a native rotation API. Instead of hacking the internals, the adapter creates a CSS-transform wrapper pane inside the DOM. The tile and overlay layers rotate, while markers, tooltips, and popups stay axis-aligned so text remains readable.
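One way to keep markers upright while the tile pane rotates (an assumption, not necessarily how the adapter does it) is to leave the markers outside the rotated pane and move only their anchor points. `rotatePoint` applies the same matrix as CSS `rotate()` about the pane's `transform-origin`, in screen coordinates with y pointing down, so positive angles turn clockwise. Both helper names are hypothetical.

```typescript
interface Pt {
  x: number;
  y: number;
}

/** CSS transform for the rotated tile/overlay wrapper; CSS rotate() turns clockwise on screen. */
function paneTransform(deg: number): string {
  return `rotate(${deg}deg)`;
}

/**
 * Screen position of an unrotated marker anchor after the pane is rotated by
 * `deg` around `origin`. This is the matrix behind CSS rotate():
 * x' = x cos a - y sin a, y' = x sin a + y cos a (relative to the origin).
 */
function rotatePoint(p: Pt, origin: Pt, deg: number): Pt {
  const r = (deg * Math.PI) / 180;
  const dx = p.x - origin.x;
  const dy = p.y - origin.y;
  return {
    x: origin.x + dx * Math.cos(r) - dy * Math.sin(r),
    y: origin.y + dx * Math.sin(r) + dy * Math.cos(r),
  };
}
```

The marker's own content is never transformed, so its text stays axis-aligned while its position follows the rotated map.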

For zoom, it hooks into Leaflet's continuous zoom path (when available) so tiles stretch while loading rather than flickering between zoom levels.

Install:

Same API, different maps

All three packages expose the same interface:

Pick whatever map library your project already uses. The gesture experience is identical across all three.

What’s next

I’m still hunting for bugs, listening to feedback, and looking at what to improve. Some things on the list:

- Additional gesture types

- Better smoothing of controls

Frequently Asked Questions

- Which map libraries are supported?

The library currently supports OpenLayers, Google Maps (JavaScript API), and Leaflet. Each has its own adapter package, but the gesture detection works identically across all three.

- Does the webcam data leave my device?

No. All hand tracking runs locally in the browser using MediaPipe's WASM module. No images or tracking data are sent anywhere. The camera feed stays on your machine.

- What gestures are available?

Left hand fist or pinch to pan, right hand fist or pinch to zoom (up = in, down = out), both hands to rotate, and hands together in a prayer pose for 1 second to reset. Both fist and pinch are supported for comfort.

- Does it work on mobile?

Yes, it works on mobile browsers with a front-facing camera. The tracking is less reliable than on desktop due to the camera angle, but it's functional. Desktop with a webcam gives the best experience for now.

- Is this better than a mouse?

No, and it's not trying to be. It's useful for kiosk displays, museum exhibits, accessibility scenarios where a mouse isn't practical, and experimental interfaces. Also, it's just fun.

Resources

- Live demo and docs: Gesture-controlled maps

- Source code on GitHub

- Project overview: Map gesture controls
